Google Veo 4: What to Expect From Google's Next Video Model

Google Veo 4 hasn't been officially announced yet. But if you've been following the AI video space, you know Google's release cadence is consistent, and with newer models pushing cinematic quality to new heights, the next generation of the Veo series feels close. So let's talk about what Veo 4 could bring to the table, where the Veo series currently stands, and what you can already do with AI video generation right now.

Where the Veo Series Stands Right Now

To understand where Veo 4 might go, you first need to know where the series has been. Veo 3 was a significant moment. It introduced native audio generation directly into the video output, which was a big deal. Before that, adding audio to AI-generated video meant a completely separate pipeline. Veo 3 collapsed that into a single workflow.

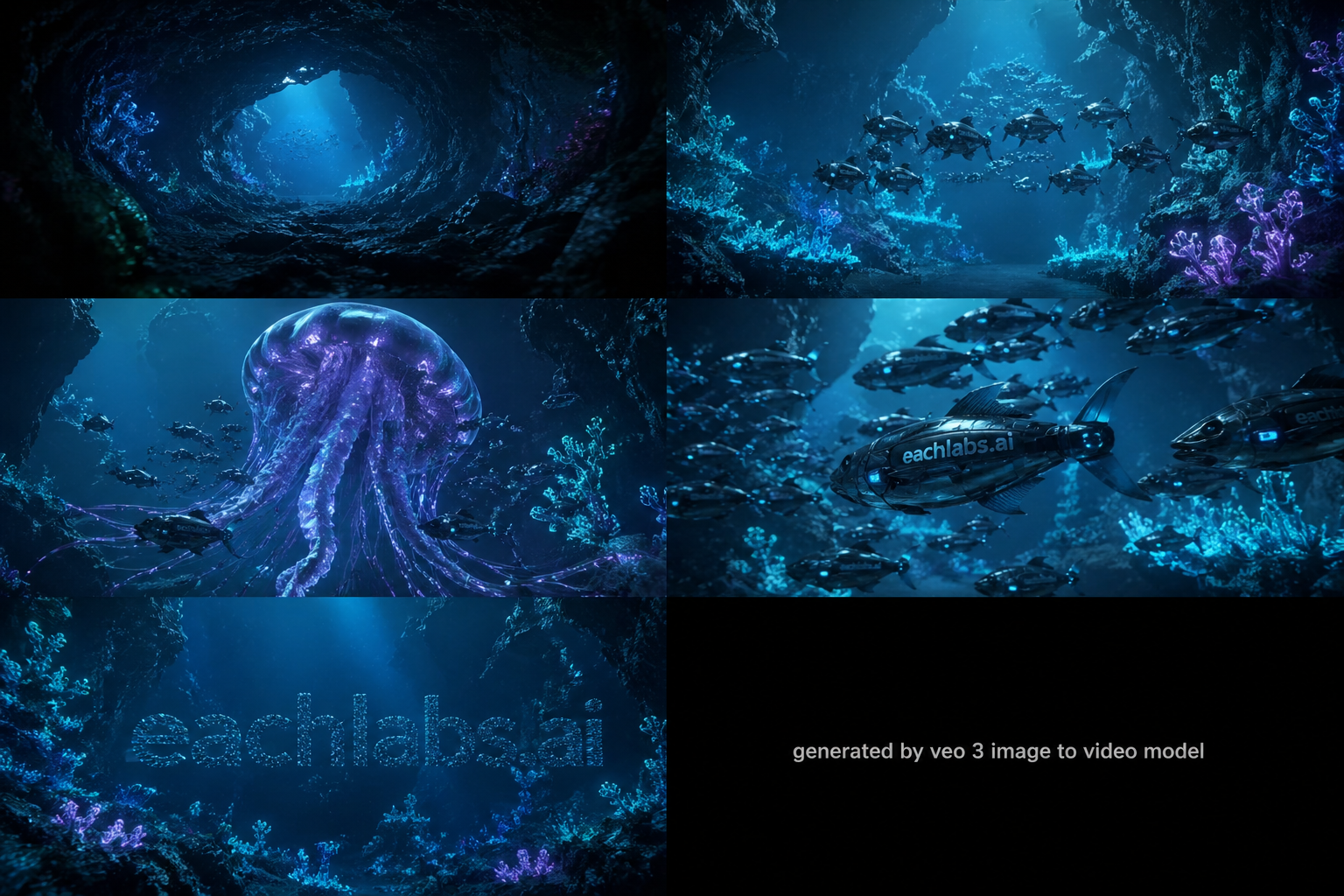

In a glowing alien underwater cave, robotic fish move in sync and form a logo as a bioluminescent creature drifts by. Generated by Veo 3 fast image-to-video model.

Veo 3.1 pushed things further. It improved image-to-video quality, bumped output to 1080p, and refined the cinematic motion that made the series stand out. The model handles physics realistically, prompt adherence is strong, and the overall visual quality holds up against anything else in the space. For creators who need polished, high-motion footage without a full production setup, Veo 3.1 is a genuinely useful tool.

A frog and a turtle host a laid-back swamp podcast, joking about a famous race as fireflies glow around them at dusk. Generated by Veo 3.1 image-to-video model.

That's the baseline. Veo 4 has a strong foundation to build on and a very competitive field to contend with.

What Google Veo 4 Could Bring

Longer Video Duration

Veo 3.1 caps at 8 seconds per clip. That's a real limitation for anyone trying to build narrative content. The entire industry is pushing toward longer, coherent output, and Veo 4 is widely expected to extend this significantly, potentially to 10 or even 15 seconds in a single pass without stitching or abrupt cuts. That would be a meaningful step forward for short drama creators and filmmakers who need more than a quick moment.

A runner sprints past the camera, excitedly sharing news about a new release before continuing the race. Generated by Veo 3.1 text-to-video fast model.
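To put the clip-length cap in concrete terms, here is a small worked sketch of how many clips and cuts a scene needs at different per-clip limits. The 60-second scene length is an illustrative assumption, and the 10- and 15-second caps are the speculative figures discussed above, not confirmed Veo 4 specs. `clips_needed` is a hypothetical helper, not part of any Veo or Eachlabs API.

```python
import math


def clips_needed(scene_seconds: float, max_clip_seconds: float) -> int:
    """Minimum number of clips needed to cover a scene at a given per-clip cap."""
    return math.ceil(scene_seconds / max_clip_seconds)


scene_seconds = 60  # assumed scene length, for illustration only

# 8 s is the current Veo 3.1 cap; 10 s and 15 s are speculative.
for cap in (8, 10, 15):
    clips = clips_needed(scene_seconds, cap)
    cuts = clips - 1  # every clip boundary is a cut to stitch by hand
    print(f"{cap}s cap: {clips} clips, {cuts} cuts")
```

Under these assumptions, going from an 8-second cap to a 15-second cap would halve the number of clips for the same scene (8 down to 4), which also means fewer boundaries where character or lighting details can drift.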

Native 4K Resolution

Right now, 1080p is the ceiling for most AI video models. Native 4K, where every pixel is generated from scratch rather than upscaled, would be a clear differentiator. Google has the infrastructure to make this happen, and given the trajectory of the series, it's a reasonable expectation for Veo 4.

Persistent Character Consistency

One of the biggest pain points in AI video today is keeping the same character consistent across multiple scenes. If you generate five clips of the same character, you often end up with five slightly different versions of that character. Veo 4 might introduce persistent character anchoring: a system where you lock a character's appearance and it stays consistent across every clip in your project, regardless of angle, lighting, or movement. This would open up much more serious storytelling workflows.

Advanced Camera Controls

Right now, cinematic camera techniques like dolly zoom, crane shots, steadicam tracking, and rack focus are largely left to chance in most models. You can describe them in a prompt, and sometimes the model follows through, but it's not reliable. Veo 4 could introduce explicit camera control parameters that make these techniques predictable. For filmmakers and advertisers, this would be a major step toward using AI video in real production pipelines.

Storyboarding

Rather than generating isolated clips, a storyboarding feature would let you define a sequence of scenes with different prompts, camera angles, and character actions, then have the model generate a unified video that follows the narrative structure you've laid out. This shifts AI video from a tool for making clips to a tool for making structured content.

What We Know About the Veo Series Right Now

The Veo series has consistently delivered on a few things: strong physics realism, solid prompt adherence, and native audio generation that actually works. Veo 3's audio was synchronized from the start, not dubbed after the fact. That's not a small thing: it means the dialogue, sound effects, and ambient noise are all generated together with the video, not layered on top afterward.

Prompt adherence is another area where the series has stood out. When you write a detailed prompt with specific camera directions, character descriptions, and scene environment, the model actually follows through on those details. That consistency between what you write and what you get is what separates a useful production tool from an impressive demo.

Physics realism is the third pillar. Fast motion, environmental interactions, fluid dynamics: the Veo series handles these in a way that makes the footage feel grounded. A character moving through a space behaves like a character moving through a space, not like a texture sliding across a background.

What the series has been working toward with each release is the kind of cinematic consistency that makes AI video useful for real production work. Longer clips, persistent characters, and explicit camera control are all part of that same direction. Veo 4 is expected to continue on that path.

What This Means for Creators

If the expected capabilities land, Veo 4 would be relevant for a wide range of use cases.

Short drama is one of the fastest-growing content formats right now. There are entire apps and platforms built around it, studios producing it at scale, and audiences consuming it in huge volumes across multiple regions. The demand for short drama content is real, and it's only growing.

For short drama creators, Veo 4 could be a strong fit. Longer clip duration is the obvious reason: right now, building a short drama scene means generating multiple short clips and cutting them together, and maintaining character consistency across those clips is a manual challenge. A model that handles this natively changes the workflow significantly.

But character consistency is arguably even more important than clip length for this use case. Short drama lives and dies on recognizable characters. Your audience needs to follow the same face, the same expressions, the same presence across every scene. If the model drifts on character details between clips, the whole narrative falls apart. Persistent character anchoring in Veo 4 would mean uploading your character reference once and trusting the model to keep it locked throughout your entire project.

Add native audio generation on top of that (synchronized dialogue, natural lip sync, and ambient sound, all generated together) and Veo 4 starts to look like a genuinely complete short drama production tool. Not a tool that helps with one part of the workflow. The whole thing.

For advertisers and brand teams, advanced camera controls and native 4K output would matter most. Being able to specify exact camera behavior and trust that the model follows through is the difference between AI video as a prototyping tool and AI video as a production tool.

For social media content creators, longer clips with built-in audio generation mean less post-production work and more time actually creating.

In a warm, futuristic café, a barista crafts coffee as friends share a heartfelt toast to creativity, with rain and city lights glowing outside.

How to Use Google Veo 4 on Eachlabs

Veo 4 is coming to Eachlabs soon. Once it's live, you'll be able to access it directly on the platform alongside other leading video models. We'll update this post as soon as it's available.

In the meantime, you can explore the current generation of AI video models on Eachlabs and start building your prompting skills, because the fundamentals carry over. Clear scene descriptions, explicit camera directions, and structured prompt flow work with every strong video model, and they'll work with Veo 4 too.

Tips for Getting the Best Results When Veo 4 Arrives

Write With Camera Direction in Mind

The Veo series has always responded well to cinematic language. When Veo 4 launches, that will still be true, and with more explicit camera controls potentially built in, being specific about your angles will matter even more. "Low-angle tracking shot," "overhead aerial view," "rack focus from foreground to background": these aren't just stylistic preferences, they're instructions the model reads.

Structure Your Prompt Like a Shot List

Don't write one long undifferentiated description. Set up the opening shot, describe the cut, specify the next angle, define the action in each segment, and close with the final frame. The model uses that structure to pace the editing rhythm of the generated video. A structured prompt produces a structured video.
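As a rough illustration of the shot-list approach, the sketch below assembles a prompt from an ordered list of shots, labeling the opening shot, each cut, and the final frame. The `Shot` class, the `build_prompt` helper, and the label wording are hypothetical conventions for organizing your own text. They are not a Veo or Eachlabs API, and no model requires this exact format.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    camera: str  # camera direction, e.g. "Low-angle tracking shot"
    action: str  # what happens in this segment


def build_prompt(shots: list[Shot]) -> str:
    """Join shots into one prompt, one labeled line per segment."""
    lines = []
    for i, shot in enumerate(shots, start=1):
        if i == 1:
            label = "Opening shot"
        elif i == len(shots):
            label = "Final frame"
        else:
            label = f"Cut to shot {i}"
        lines.append(f"{label}: {shot.camera}. {shot.action}")
    return "\n".join(lines)


# Example content is made up for illustration.
shots = [
    Shot("Low-angle tracking shot", "A runner sprints along a rain-slick street."),
    Shot("Overhead aerial view", "The runner weaves through a crowded plaza."),
    Shot("Rack focus from foreground to background", "The finish line comes into focus."),
]

prompt = build_prompt(shots)
print(prompt)
```

Keeping each segment as its own record makes it easy to reorder shots or swap a camera direction without rewriting the whole prompt.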

Think in Scenes, Not Clips

If the storyboarding feature lands as expected, Veo 4 will let you think in full scenes rather than individual clips. Start planning your content that way: define the beginning, middle, and end of your scene before you write a single prompt. That mental model will translate directly into better outputs.

Use Character References Early

If persistent character anchoring is part of Veo 4, uploading your character reference at the start of your project and locking it before generating any clips will be the right workflow. Consistency across scenes starts with a consistent reference.

Wrapping Up

The Veo series has been one of the strongest threads running through AI video generation, from Veo 3's native audio to Veo 3.1's cinematic image-to-video output. Veo 4 is expected to push that further with longer clips, native 4K, persistent character consistency, and more precise camera controls. Nothing is officially confirmed yet, but the direction is clear. When Veo 4 becomes available on Eachlabs, we'll have everything you need to get started.

Frequently Asked Questions

Is Veo 4 officially announced?

Not yet. Veo 4 hasn't been officially announced by Google DeepMind. What we know is based on the trajectory of the Veo series, competitive pressure from other leading video models, and general industry direction. When an official announcement is made, we'll update this post with confirmed details.

What's the difference between Veo 3, Veo 3.1, and Veo 4?

Veo 3 introduced native audio generation alongside video. Veo 3.1 improved image-to-video quality, pushed output to 1080p, and refined cinematic motion. Google Veo 4 is expected to extend clip duration significantly, add native 4K output, improve character consistency across scenes, and introduce more explicit camera control, though none of this is officially confirmed yet.

When will Veo 4 be available on Eachlabs?

Veo 4 is coming to Eachlabs soon. We'll update this page as soon as it's available on the platform.