DreamActor V2: Images Into Motion Videos

AI video generation has changed quickly over the past few years. Models can now generate scenes from text prompts, create movie-like videos, and even make environments look real. One thing is still really hard to do: making a picture move naturally without changing what is in it.

This is where ByteDance DreamActor V2 comes in.

DreamActor V2 does not make a new video from nothing. Instead, it does something more controlled that is often more useful for creators. It takes a picture and a video, then makes the character in the picture move like the person in the video.

So the character in your picture starts to move, gesture and show emotions like the person in the video.

This opens up a lot of possibilities for creators. A picture of someone can turn into a talking video, a cartoon character can move like a person, or a picture of a pet can look like it is alive.

DreamActor V2 makes sure the character and background in the picture stay the same while the movement looks real.

Let us take a look at how DreamActor V2 works and why it is becoming a useful tool for people working with characters.

What Is DreamActor V2?

DreamActor V2 is an AI video generation model made by ByteDance. It takes a picture as input and makes the character in it move by using movement from another video.

The way it works is really simple.

You give it two things:

A picture with the character you want to move

A video that shows the movement, expressions and gestures you want

DreamActor V2 looks at the movement in the video and applies it to the character in the picture.

So the video it makes keeps:

The character looking the same

The picture looking the same

The background of the picture the same

At the same time it makes the character move, gesture and show emotions like the person in the video.

The final video is 720p and 25 frames per second. It looks like the character from the picture is moving like the person in the video.

This is called motion driving or pose transfer. DreamActor V2 is really good at making the result look stable and consistent.

Key Capabilities of DreamActor V2

DreamActor V2 introduces several features that make it stand out compared to many other motion-driven video generation tools.

Motion Transfer From Video to Image

DreamActor V2 can take motion from a video and put it into a still image in a very natural way. It does not create a new character for every frame. Instead, it looks at the video frame by frame and takes out the movement patterns. This includes things like how the body is posed, hand gestures, where the head is looking, facial expressions, and even small lip movements.

Once DreamActor V2 understands how the character in the video moves, it puts those movement patterns into the character in the image. The goal is not to recreate the person from the video but to make the character in the image do the same things.

What is really interesting is how DreamActor V2 keeps the character in the image looking the same. The face, clothes, and overall look of the character stay the same while the motion is added on top. You do not see a new face in every frame. You see the same character come to life and do the movements.

Because of this the video does not look like it was completely made from scratch. It looks like the image itself is moving. The character looks like it is moving naturally in the scene and still looks like the person, pet or character from the original image.

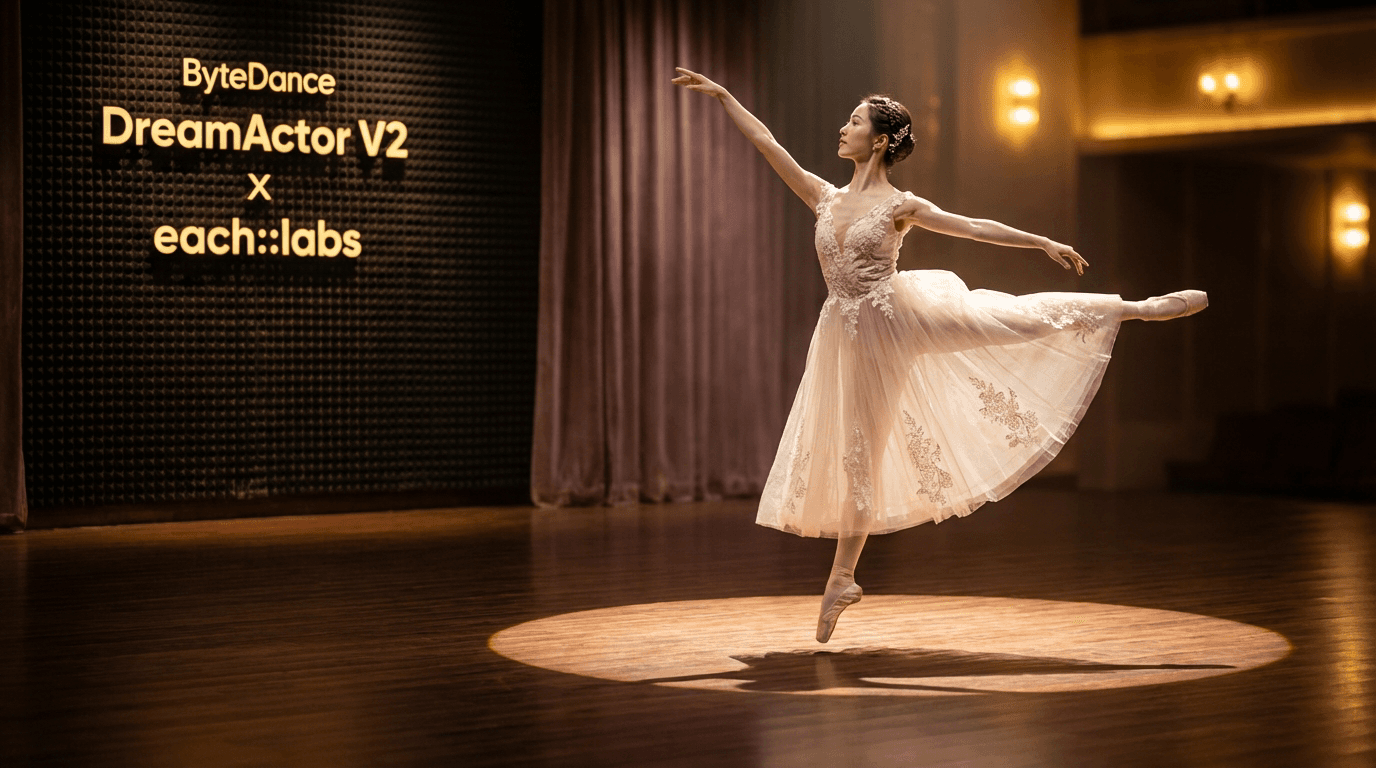

DreamActor example showing strong character and background preservation during animation.

Strong Character and Background Preservation

One of the problems with animation is keeping things looking the same over time. When a model makes a video frame by frame, small changes can add up and change how a character looks. This is called identity drift, where the face, clothes, or background slowly change from one frame to another.

DreamActor V2 is designed to stop this from happening. It focuses on keeping the key parts of the image the same. Instead of making everything new each frame, it uses the image as a reference and tries to keep the important things intact while adding motion.

This means it tries to keep:

the face looking the same

the clothes looking the same

the background looking the same

So the video looks more coherent. The character looks like the same person throughout the whole clip, and the background looks the same too.

This is really important for people who want to animate portraits, mascots or characters without seeing them change during the animation.

Multi-Character Driving

DreamActor V2 can also animate multiple characters at the same time. Many models can only animate one character, which can limit how they are used in stories or group scenes.

DreamActor V2 can look at the movement patterns from the video and put them into several characters in the image. This makes it possible to animate scenes where many characters do the same thing.

For example, imagine an image with a group of characters standing together. By using a video of someone doing a gesture or movement, the model can put that movement into each character in the image. The characters may look like they are doing the same gesture or reacting together in the scene.

This makes it possible for creators to make videos where many characters interact, react, or move together. For people making media animations, storytelling visuals, or character-driven scenes, this feature can make the animation process much easier.

Example of multi-character driving generated with DreamActor.

Non-Human Character Driving

DreamActor V2 can also animate characters that are not human. This includes characters like:

illustrated figures

stylized avatars

animals

fantasy creatures

Because the model looks at pose and motion it can put movement patterns into characters that do not look like humans.

For instance a stylized character illustration can get the gestures and body language from a person in the video. Even though the character may look very different from a human the motion still looks real because it is based on real-world movement patterns.

For people working with storytelling, character design or stylized visuals this can be very useful. It lets them animate characters quickly without needing animation tools.

Example of non-human character driving generated with DreamActor.

CG, 3D and Pixar-Style Character Animation

DreamActor V2 also works well with characters from CG or 3D environments. Many creators make stylized characters that look like animation styles from films or games and these characters can also be animated using the model.

Examples include:

Pixar-style characters

stylized animated pets

3D mascots

game-inspired characters

Imagine having an image of a cartoon dog designed in a stylized 3D style. By using a video of someone doing gestures or expressions DreamActor V2 can put those movements into the dog. The result is an animation where the character looks like it is reacting, gesturing or moving naturally while still looking like the original design.

This can be useful for many types of creative content. Brands could animate mascot characters for promotional clips. Social media creators could make character reactions. Designers could bring stylized characters to life without building animation tools.

Because the model keeps the character design while adding motion, the animation still feels connected to the original artwork. Instead of replacing the design, the model just gives it movement.

This approach lets static characters become animated moments, with much less effort than traditional animation workflows would need.

Example of CG and Pixar-style character animation generated with DreamActor.

Best Practices for Getting Better Results

DreamActor V2 works best when you prepare the input materials carefully.

Like other AI systems, DreamActor V2 needs good input to get the best results.

Here are some tips that can help you get better results from DreamActor V2.

Match Character Framing

The character in the picture and the character in the template video should look similar.

They should have similar body proportions and framing.

For example, if the reference video shows a character from the waist up, the input picture should show the character framed the same way and from a similar angle.

This helps DreamActor V2 create stable motion.

Avoid Rapid Camera Motion

If the template video has rapid camera movement, it can create unstable motion.

For the best results, the reference video should have clear and smooth character movement.

Large camera shifts or frequent scene changes can make the generated animation less stable.

Use High-Quality Template Videos

With DreamActor V2, the quality of the template video makes a real difference. The model takes motion from this video and puts it into the image, so the clearer the video is, the better the animation looks.

DreamActor V2 can work with many kinds of videos. The template video can be as small as 200×200 or as big as 2048×1440. It can be up to 30 seconds long. The model will still work with lower-quality videos, but higher-quality ones usually give better results.

The reason for this is simple. When the template video is clear the model can see things like finger movement, facial expressions, eye direction and lip motion more easily. This helps the model track these things better.

For example, if the template video shows hand gestures clearly, DreamActor V2 can copy those gestures more precisely in the animation. The same thing happens with expressions. If the face in the video is clear and well-lit, the model can see how the mouth moves, how the eyebrows shift, or how the head tilts when someone is talking.

It also helps to use videos where the character is easy to see and not blurry. If the video is blurry or otherwise degraded, it can be hard for the model to understand the motion. This can make the animation look less stable, or the gestures may not look right.

So even though the model can work with different kinds of videos, it is a good idea to use high-quality videos with DreamActor V2. Clear videos give the model more information, which usually makes the motion look smoother and the animation more realistic.

Keep Characters Visible

The character in the picture should be fully visible and facing forward.

If the character is partly blocked, it can cause problems for DreamActor V2.

Common examples are a character with their arms crossed behind their body or objects covering their face.

Reducing these blockages helps DreamActor V2 maintain stability.

Input and Output Specifications

DreamActor V2 is pretty simple to use.

It supports the following inputs:

- Image
  - Formats: JPG, JPEG, PNG
  - File size: under 4.7MB
  - Resolution: 480×480 to 1920×1080
- Template Video
  - Formats: MP4, MOV, WEBM
  - Resolution: 200×200 to 2048×1440
  - Maximum duration: 30 seconds

DreamActor V2 can work with people, animated characters, pets and stylized subjects.
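As a rough illustration, the format, file-size, and template-video limits listed above could be checked locally before uploading anything. This is only a sketch: the function names `check_image` and `check_template_video` are made up for this example and are not part of any DreamActor or Eachlabs API. It also assumes that 1MB means 1,000,000 bytes and that the resolution range applies to the longer and shorter side of the video regardless of orientation. You would need to read the width, height, and duration from the file yourself with a media tool.

```python
from pathlib import Path

# Limits taken from the specification list in this article.
IMAGE_FORMATS = {".jpg", ".jpeg", ".png"}
VIDEO_FORMATS = {".mp4", ".mov", ".webm"}
MAX_IMAGE_BYTES = 4_700_000   # assumption: "4.7MB" means 4.7 million bytes
MIN_VIDEO_SIDE = 200          # from the 200×200 lower bound
MAX_VIDEO_LONG_SIDE = 2048    # from the 2048×1440 upper bound
MAX_VIDEO_SHORT_SIDE = 1440
MAX_VIDEO_SECONDS = 30.0


def check_image(filename: str, size_bytes: int) -> list[str]:
    """Return a list of problems with the input picture (empty if none)."""
    problems = []
    if Path(filename).suffix.lower() not in IMAGE_FORMATS:
        problems.append("image format must be JPG, JPEG, or PNG")
    if size_bytes >= MAX_IMAGE_BYTES:
        problems.append("image file must be under 4.7MB")
    return problems


def check_template_video(filename: str, width: int, height: int,
                         seconds: float) -> list[str]:
    """Return a list of problems with the template video (empty if none)."""
    problems = []
    if Path(filename).suffix.lower() not in VIDEO_FORMATS:
        problems.append("video format must be MP4, MOV, or WEBM")
    # Assumption: limits apply to the long and short side, not width/height.
    long_side, short_side = max(width, height), min(width, height)
    if (short_side < MIN_VIDEO_SIDE
            or long_side > MAX_VIDEO_LONG_SIDE
            or short_side > MAX_VIDEO_SHORT_SIDE):
        problems.append("video resolution must be 200×200 to 2048×1440")
    if seconds > MAX_VIDEO_SECONDS:
        problems.append("video must be 30 seconds or shorter")
    return problems
```

For example, `check_template_video("dance.mp4", 1280, 720, 12.0)` returns an empty list, while a 45-second clip would come back with a duration problem.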

Try DreamActor V2 on Eachlabs

If you want to try DreamActor V2 you can use it on Eachlabs.

You can upload your picture and template video, run DreamActor V2, and see how the motion transfer works.

This makes it easy to experiment with different inputs and see how DreamActor V2 works with different characters.

You can try things like animating a portrait with expressions, creating short animated clips from illustrated characters, and generating motion for CG or stylized pets.

If you want to try motion-driven video generation without building animation pipelines, trying DreamActor V2 on Eachlabs is a good place to start.

Wrapping Up

DreamActor V2 is a bit different from most AI video generation models.

Instead of creating videos from scratch, it focuses on transferring motion from a reference video to a still image.

This helps preserve the character's identity and visual details.

DreamActor V2 has features like multi-character driving, non-human animation, and strong pose replication, which make it interesting for creators working with character-based content.

While it may not replace full animation pipelines, it provides a faster and more accessible way to bring static visuals to life.

As motion-driven AI tools continue to evolve models like DreamActor V2 are showing how image-based animation can become a creative tool.

Frequently Asked Questions

What Is DreamActor V2?

DreamActor V2 is an AI video generation model that animates characters from a single image by transferring motion from a reference video.

Can DreamActor V2 animate non-human characters?

Yes, DreamActor V2 can animate non-human characters, including animated characters, pets, and stylized figures.

What resolution does DreamActor V2 output?

DreamActor V2 generates videos in 720p resolution at 25 frames per second.