Bytedance Seedream V5 Lite: Text to Image Guide

Most text to image models take your prompt and interpret it in one pass. They guess at what you mean, apply their training distribution, and produce something that is approximately what you described. Bytedance Seedream V5 Lite does something different before it generates anything: it thinks.

Released on February 24, 2026 as part of ByteDance's Seedream 5.0 architecture, the Lite variant is the first model in the Seedream family to apply Chain of Thought reasoning to the generation process. Before a single pixel is produced, the model breaks down your prompt into components (material properties, lighting physics, spatial relationships, compositional intent), resolves any ambiguities, and then generates. The result is output that reflects what you actually described rather than a statistically likely interpretation of your keywords.

Add real-time web search integration on top of that, and you have a text to image model that can produce a marketing poster with a current brand logo, a topical visual that references a recent event, or a landmark rendered with accurate architectural detail, drawing not on training data that might be months out of date but on live information pulled at generation time.

What Is Bytedance Seedream V5 Lite?

Bytedance Seedream V5 Lite is a text to image model developed by ByteDance and available on Eachlabs. It belongs to the Seedream 5.0 family and sits in the Lite tier, meaning it prioritizes generation speed and efficiency without compromising on output quality. Average run time is 50 seconds, which is fast enough to make genuine iteration practical rather than something you plan your schedule around.

The model outputs up to 2K resolution in a range of aspect ratios including 16:9, 1:1, and custom formats. Output files are PNG or JPEG, optimized for both web and print use. The category is Text to Image: no video, no image editing, no multi-modal inputs. You write a prompt, the model generates an image.
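To get a rough feel for what those aspect ratios mean in pixels, here is a minimal Python sketch. It assumes that "2K" means a long edge of 2048 pixels, which this guide does not confirm, and the function name `dimensions_for_ratio` is purely illustrative. The model's actual output sizes may differ.

```python
def dimensions_for_ratio(ratio_w, ratio_h, long_edge=2048):
    """Return (width, height) in pixels for an aspect ratio, capping the longer side.

    Assumption: "2K" is treated as a 2048-pixel long edge. This is a guess for
    illustration, not a documented property of the model.
    """
    if ratio_w <= 0 or ratio_h <= 0 or long_edge <= 0:
        raise ValueError("ratio parts and long_edge must be positive")
    if ratio_w >= ratio_h:
        # Landscape or square: the width is the long edge.
        return long_edge, round(long_edge * ratio_h / ratio_w)
    # Portrait: the height is the long edge.
    return round(long_edge * ratio_w / ratio_h), long_edge


for ratio in [(16, 9), (1, 1), (9, 16)]:
    width, height = dimensions_for_ratio(*ratio)
    print(f"{ratio[0]}:{ratio[1]} -> {width} x {height} px")
```

Under that assumption, a 16:9 frame comes out at 2048 x 1152 pixels and a 1:1 frame at 2048 x 2048.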

What distinguishes it from other fast text to image tools is the combination of three things that rarely appear together: Chain of Thought reasoning that improves output coherence and prompt adherence, real-time web search that keeps generated content accurate and current, and physics-based rendering that handles light refraction, material translucency, and global illumination with a level of realism that most generative models approximate rather than simulate.

How Bytedance Seedream V5 Lite Works

The Chain of Thought approach is worth understanding because it changes what prompts can actually accomplish. In a standard generation pipeline, your prompt goes in and the model samples from its learned distribution of what images matching those keywords tend to look like. Ambiguous prompts produce averaged or generic results because the model has no mechanism for resolving what you actually meant.

Bytedance Seedream V5 Lite adds a reasoning step before generation. The model reads your prompt and reasons through it: what is the primary subject, what are the material properties being described, what is the lighting situation, what is the compositional intent, what details are most critical to preserve? It resolves the prompt into a coherent visual specification before committing to generation. This is what allows complex, layered prompts (the kind that specify subject, environment, lighting, texture, and composition simultaneously) to produce coherent output rather than a blend of competing visual signals.

The real-time web search integration works alongside this reasoning step. For prompts that reference specific real-world entities (brand logos, landmarks, current events, recent products), the model pulls live information rather than relying on training data. A poster prompt that includes a specific company's branding can use the current logo. A scene set in a recognizable city landmark can reference the accurate architecture. This is a genuinely different capability from models that can only generate what they were trained on.

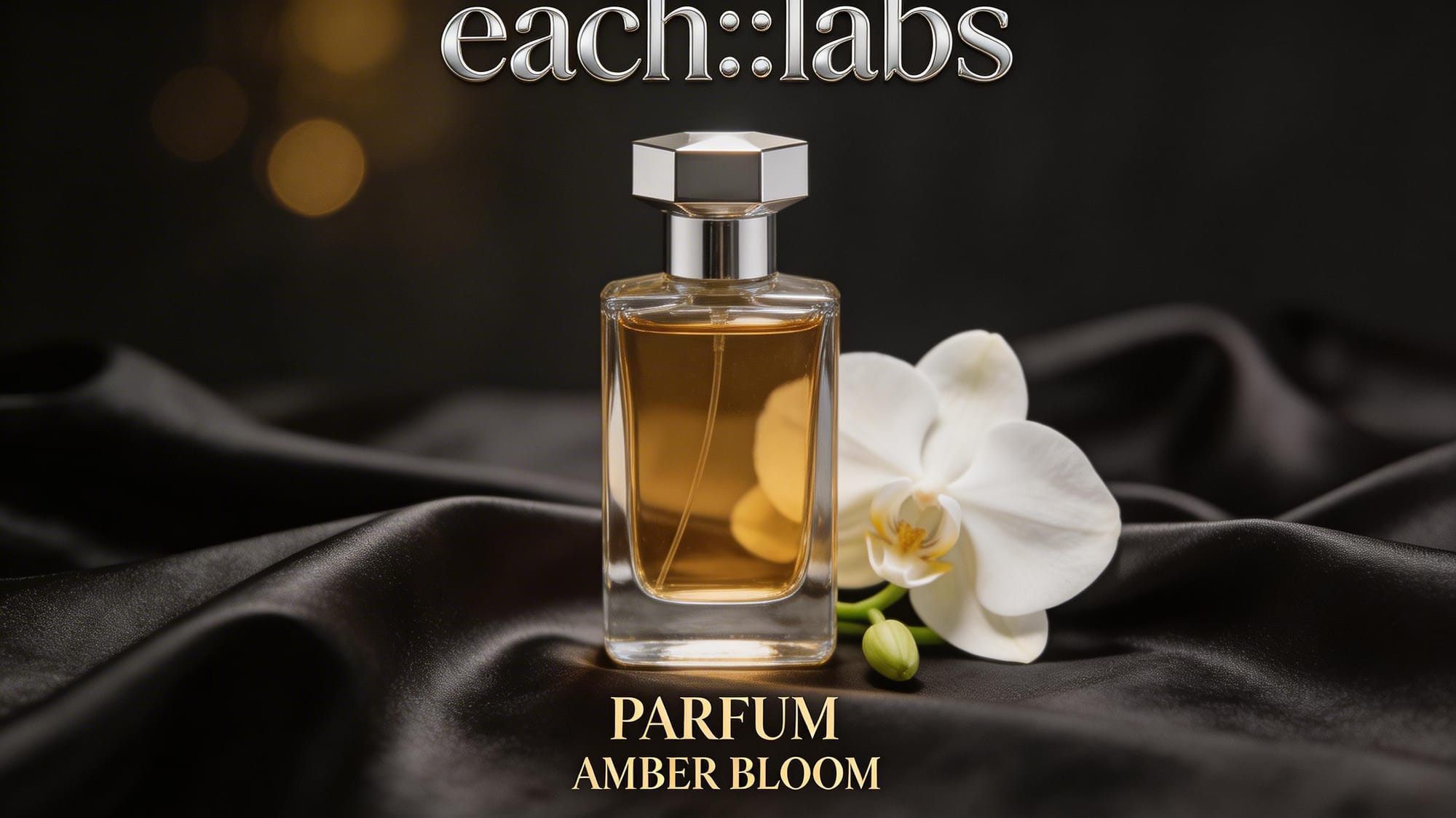

The physics-based rendering layer handles the visual qualities that separate convincing images from technically competent ones. Light refraction through glass, global illumination bouncing off metallic surfaces, translucency in organic materials, accurate shadow behavior: these are computed properties rather than textures applied from training examples. A glass object in a Bytedance Seedream V5 Lite image bends light the way glass actually bends light.

Key Features of Bytedance Seedream V5 Lite

Chain of Thought Reasoning Before Generation

This is the feature that sets Bytedance Seedream V5 Lite apart from comparable speed-tier text to image models. The model does not jump from prompt to output. It reads, reasons, resolves, then generates. For creators writing detailed prompts with multiple interconnected visual requirements, this means the generated image reflects the actual intent of the description rather than averaging across its components.

The practical difference shows up most clearly in prompts that specify several simultaneous constraints: a specific material, a particular lighting quality, a defined spatial relationship between elements, a style reference. Without reasoning, these constraints compete. With Chain of Thought, they inform each other, and the output addresses all of them as a unified visual specification.

Real-Time Web Search Integration

Most text to image models are frozen in time. Whatever was in their training data is what they know. Bytedance Seedream V5 Lite connects to live information, which means prompts that reference current events, recent products, specific brand identities, or contemporary landmarks can be generated with accurate, up-to-date visual information rather than the model's approximation of what those things looked like when it was trained.

For marketing teams producing time-sensitive visual content, this matters. For designers creating assets that reference current brand standards, this matters. For any workflow where visual accuracy to current real-world entities is part of the brief, the live search integration removes a significant limitation that most generative tools impose.

Physics-Based Material and Light Rendering

The rendering quality in Bytedance Seedream V5 Lite reflects actual physical behavior rather than learned texture patterns. Light refracts through glass the way physics says it should. Metal surfaces scatter and reflect according to their material properties. Velvet shows the directional sheen that comes from fiber orientation. Water holds the translucency and internal scattering that make it look like water rather than a blue surface.

This is the technical foundation that makes outputs from this model usable in professional contexts where photorealism is evaluated carefully. The example prompt on the Eachlabs page (a high-fashion editorial portrait with sculptural headpiece, deep jewel tones, razor-sharp eye focus, and dramatic sculpted studio lighting) is a demanding brief that requires accurate skin texture rendering, complex fabric material behavior, and precise lighting physics to work. The model handles all of this from a text description.

Fast Generation at 50-Second Average

Speed in a text to image model is not just a convenience feature. It determines whether iteration is practically possible within a working session. At 50 seconds average, Bytedance Seedream V5 Lite enables genuine creative exploration (trying multiple prompt approaches, refining specific elements, comparing compositional options) rather than committing to a single generation and waiting for results.

For developers building applications that surface image generation to end users, 50-second generation puts the user experience in a range where real-time interaction feels plausible. For designers and marketers doing rapid concept development, 50 seconds means you can generate, evaluate, and adjust multiple times before presenting a direction.

2K Resolution Output

Output resolution caps at 2K, which covers the full range of screen-based distribution contexts from mobile to desktop and most print applications up to moderate sizes. For marketing visuals, social media assets, web content, editorial imagery, and medium-format print, 2K is the right working resolution. For large-format print where the output will be inspected at close range, it is the ceiling, though in practice most text to image generation at this quality level is used in contexts well within 2K's capabilities.
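To put "moderate sizes" into numbers, the sketch below converts pixel dimensions to print dimensions. It rests on two assumptions that are not from this guide: that a 2K square output is 2048 x 2048 pixels, and that 300 DPI is the target for print viewed at close range while 150 DPI is acceptable for pieces viewed from farther away. Both are common rules of thumb rather than specifications.

```python
def print_size_inches(width_px, height_px, dpi):
    """Return the (width, height) in inches at which an image prints at the given DPI."""
    if dpi <= 0:
        raise ValueError("dpi must be positive")
    return width_px / dpi, height_px / dpi


# Assumption: a "2K" square output is 2048 x 2048 pixels.
for dpi in (300, 150):
    width_in, height_in = print_size_inches(2048, 2048, dpi)
    print(f"{dpi} DPI: {width_in:.1f} x {height_in:.1f} in")
# prints:
# 300 DPI: 6.8 x 6.8 in
# 150 DPI: 13.7 x 13.7 in
```

Under these assumptions, a 2K image holds up at roughly 7 inches per side for close inspection and roughly twice that for pieces viewed at a distance.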

Real World Use Cases

The combination of Chain of Thought reasoning, real-time web search, and physics-based rendering opens up use cases that simpler text to image tools handle poorly.

Marketing visual production is one of the clearest applications. A team producing campaign imagery can generate product placement visuals, lifestyle scenes, and brand-adjacent content with the model's real-time search ensuring that any referenced brand elements, locations, or cultural touchstones are rendered accurately. The physics-based rendering means product images look like the product rather than an AI's generalized interpretation of it.

Editorial and publication imagery uses Bytedance Seedream V5 Lite to generate topical visuals for content that references current events or contemporary subjects. The real-time search integration is the specific capability that makes this viable: a generated illustration for an article about a recent development can reference actual visual information rather than producing something anachronistic.

Concept art and creative development benefit from the Chain of Thought reasoning. Complex scene briefs with multiple simultaneous visual requirements (a specific lighting environment, a particular material quality, a defined compositional structure) produce coherent results rather than generic approximations. Game developers, film production teams, and product designers use this for rapid visualization of creative directions before committing to more expensive production.

Brand asset creation uses the combination of real-time search and precise prompt adherence to generate on-brand imagery that references current visual standards. Marketing teams building a content library can generate variations of approved visual concepts while maintaining brand consistency across the generated assets.

Developers building applications that include image generation features integrate Bytedance Seedream V5 Lite via the API on Eachlabs. The 50-second average run time makes it practical for applications where users expect results within a reasonable wait, and the quality ceiling is high enough to serve professional contexts rather than just casual creative use.

How to Use Bytedance Seedream V5 Lite on Eachlabs

The playground for Bytedance Seedream V5 Lite on Eachlabs accepts a text prompt, a resolution setting, an aspect ratio selection, and optional advanced controls. The workflow is simple; the complexity sits in the prompt rather than the interface.

Write your prompt with specificity across the dimensions that the model's reasoning layer can work with: subject, material properties, lighting quality, compositional structure, and style reference. The model responds to physical descriptions ("light refracting through glass," "global illumination on a metallic surface," "velvet with directional fiber sheen") because it has the rendering capability to execute them. Generic descriptors like "beautiful" or "high quality" add less to the output than specific physical and compositional direction.

For prompts that reference real-world entities (logos, landmarks, current events, specific products), write the reference clearly. The real-time search integration works best when the reference is specific enough for the search to return accurate results. "The Eiffel Tower at golden hour" works better than "a famous Parisian landmark" because the specific name gives the search layer an unambiguous query.

Resolution and aspect ratio should match your distribution context before you generate rather than after. Generating in 1:1 and then cropping to 16:9 wastes image area and introduces compositional problems. Set the format that fits your end use.
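The cost of cropping after the fact is easy to quantify. The following Python sketch computes how much of an image survives the largest possible crop from one aspect ratio to another. The function name `retained_fraction` is illustrative and not part of any Eachlabs tooling.

```python
from fractions import Fraction


def retained_fraction(src_w, src_h, dst_w, dst_h):
    """Fraction of the source area kept by the largest crop to the target aspect ratio."""
    src = Fraction(src_w, src_h)
    dst = Fraction(dst_w, dst_h)
    if dst > src:
        # Target is wider than the source: keep the full width, trim the height.
        return src / dst
    # Target is narrower than (or equal to) the source: keep the full height, trim the width.
    return dst / src


kept = retained_fraction(1, 1, 16, 9)
print(f"kept {float(kept):.2%}, discarded {float(1 - kept):.2%}")
# prints: kept 56.25%, discarded 43.75%
```

Cropping a square image to 16:9 throws away 7/16 of the pixels, which is why setting the target format before generating is the better habit.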

Tips for Getting the Best Results

Describe Physical Properties, Not Just Visual Outcomes

The physics-based rendering in Bytedance Seedream V5 Lite responds to material and light descriptions that specify the underlying physics rather than just the desired look. "Light refracting through curved glass onto a marble surface" gives the model a physically defined scenario to render. "Glowing glass on stone" gives it a visual outcome to approximate. The first prompt uses the model's rendering capability; the second relies on its learned associations.

Layer Your Prompt Structurally

The Chain of Thought reasoning works best when the prompt is organized around coherent visual components rather than keyword lists. Subject first, then environment, then lighting, then material detail, then style and composition. This structure matches the way the model's reasoning layer processes the description, moving from broad scene definition to specific visual constraints. A prompt organized this way tends to produce more coherent results than one that mixes all elements together without structure.
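One way to keep that ordering consistent across many prompts is to assemble them from named parts. The Python sketch below is a hypothetical helper, not an Eachlabs feature: the `PromptSpec` class and its field names are assumptions chosen to mirror the order described above, and the output is just a plain text prompt.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container for the layers of a structured prompt."""
    subject: str
    environment: str = ""
    lighting: str = ""
    material: str = ""
    style: str = ""

    def render(self):
        """Join the non-empty layers in order: subject, environment, lighting, material, style."""
        if not self.subject.strip():
            raise ValueError("a prompt needs a subject")
        layers = [self.subject, self.environment, self.lighting, self.material, self.style]
        sentences = [layer.strip().rstrip(".") for layer in layers if layer.strip()]
        return ". ".join(sentences) + "."


spec = PromptSpec(
    subject="A hand-blown glass vase",
    environment="On a marble table in a quiet studio",
    lighting="Light refracting through curved glass onto the marble surface",
    material="Velvet backdrop with directional fiber sheen",
    style="Editorial product photography, centered composition",
)
print(spec.render())
```

Empty layers are skipped, so the same helper works for short prompts that only specify a subject and lighting.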

Use Real-Time Search Cues Specifically

For prompts that need current accuracy (brand logos, recent architecture, contemporary cultural references), be specific enough that the search integration has a clear query to work with. The model's web search capability returns accurate results when the reference is unambiguous. Vague references produce generic approximations instead of accurate current depictions.

Run Multiple Variations Before Committing

At 50 seconds per generation, running three or four variations of a prompt approach is practical within a short working session. Rather than refining a single prompt iteratively through many versions, generate several distinct approaches (different compositional structures, different lighting setups, different material emphases) and evaluate them as a set before deciding which direction to develop further.
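A small script can lay out such a set of variations before you generate anything. The Python sketch below only builds prompt strings and estimates the total wait. It does not call any API, the helper names are illustrative, and the time estimate assumes the runs happen one after another at the 50-second average quoted in this guide.

```python
from itertools import product

AVERAGE_SECONDS_PER_RUN = 50  # average run time quoted in this guide


def prompt_variations(subject, compositions, lightings):
    """Build one prompt per (composition, lighting) combination."""
    return [
        f"{subject}. {composition}. {lighting}."
        for composition, lighting in product(compositions, lightings)
    ]


def estimated_wait_seconds(run_count, seconds_per_run=AVERAGE_SECONDS_PER_RUN):
    """Estimate total wait, assuming the runs are sequential."""
    return run_count * seconds_per_run


variants = prompt_variations(
    "A glass vase on a marble table",
    ["Centered close-up", "Wide shot with negative space"],
    ["Soft window light", "Hard studio light from the left"],
)
for prompt in variants:
    print(prompt)
print(f"{len(variants)} runs, about {estimated_wait_seconds(len(variants))} seconds")
```

Two compositions crossed with two lighting setups give four prompts, or a little over three minutes of sequential generation at the quoted average.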

Test Aspect Ratio Early

Composition works differently across aspect ratios. A prompt that produces a well-balanced 1:1 image may produce awkward 16:9 framing where the subject feels lost in a wide landscape. Test your prompt at the target aspect ratio from the first generation rather than discovering the compositional problem after several refinement iterations.

Wrapping Up

Bytedance Seedream V5 Lite is a text to image model that actually thinks before it generates. Chain of Thought reasoning, real-time web search, and physics-based material and light rendering combine in a Lite-tier model that runs in 50 seconds and outputs at 2K resolution. For marketing teams, designers, creative directors, and developers who need accurate, detailed, promptable image generation at a speed that supports real iteration, it is worth trying on Eachlabs today.

Frequently Asked Questions

What makes Bytedance Seedream V5 Lite different from other text to image models?

Three things set it apart from comparable speed-tier models. First, Chain of Thought reasoning: the model analyzes and resolves your prompt before generating, which produces more coherent output from complex, multi-layered descriptions. Second, real-time web search integration: the model pulls live information for prompts that reference current brands, events, or landmarks, producing accurate depictions rather than relying on potentially outdated training data. Third, physics-based rendering: materials and light behave according to actual physics rather than learned texture approximations, which is why glass refracts light correctly and metallic surfaces scatter the way real metal does.

What resolution does Bytedance Seedream V5 Lite output?

The model outputs up to 2K resolution in PNG or JPEG formats, with support for standard aspect ratios including 16:9, 1:1, and custom ratios. This covers the full range of screen-based distribution from mobile to desktop, most web and editorial publishing contexts, and medium-format print applications. For ultra-large-format print where fine detail will be inspected at close range, the 2K ceiling is worth factoring into your workflow planning.

How does the real-time web search integration work in practice?

When your prompt references a specific real-world entity (a brand logo, a contemporary landmark, a recent event), the model performs a live search to retrieve accurate current visual information rather than drawing only from its training data. This means generated images can reflect current brand standards, recent architectural changes, or contemporary cultural elements accurately. The integration works best when your prompt references the entity specifically by name rather than through a vague description, because a specific name gives the search layer an unambiguous query to work from.