Instant ID Generate Avatar

inference · 44.4s

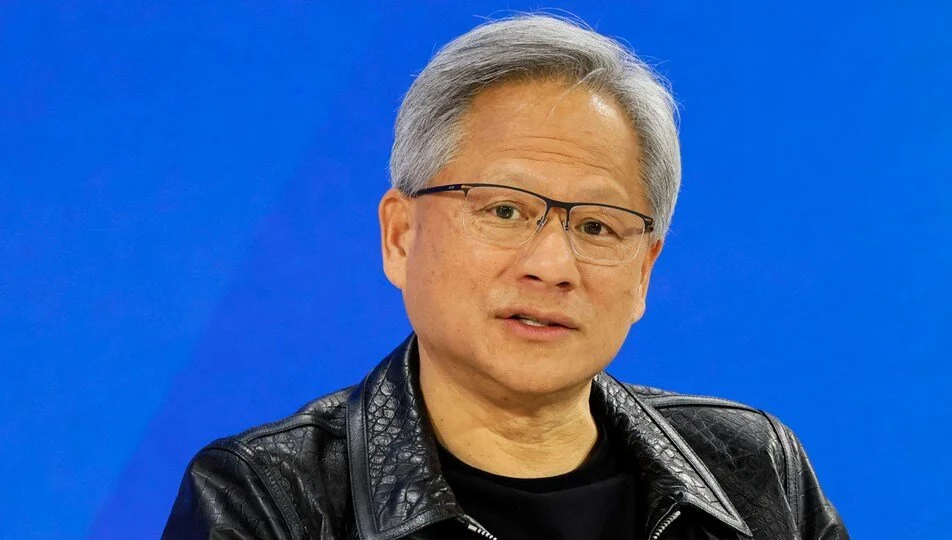

Instant ID generates realistic images of real people in seconds.

- Runtime (p50): 32s

- Estimated price: $0.00108 / sec
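The two figures above can be combined into a rough per-run cost. The sketch below assumes billing is linear in runtime, which this page does not state; the function and constant names are illustrative only.

```python
# Rough per-run cost estimate from the listed figures.
# Assumption: cost = runtime * price per second (linear billing).
PRICE_PER_SECOND_USD = 0.00108  # listed estimated price
P50_RUNTIME_SECONDS = 32        # listed median (p50) runtime


def estimate_cost(runtime_seconds, price_per_second=PRICE_PER_SECOND_USD):
    """Return the estimated cost in USD for one run of the given length."""
    if runtime_seconds < 0:
        raise ValueError("runtime_seconds must be non-negative")
    return runtime_seconds * price_per_second


print(f"${estimate_cost(P50_RUNTIME_SECONDS):.5f}")  # prints $0.03456
```

Under that assumption, a median run costs about 3.5 cents; slower runs scale proportionally.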

Overview

instant-id — Image-to-Image AI Model

instant-id from Tencent is an image-to-image model that generates photorealistic depictions of real people from a reference photo and a text prompt. Part of Tencent's instant-id family, it focuses on preserving facial identity and detail, so consistent, high-fidelity portraits can be produced without per-subject training data. This makes it a fit for rapid, accurate edits in e-commerce and content pipelines.

Powered by Tencent's multimodal architecture, instant-id supports reference-based generation, producing outputs that keep the facial features, expressions, and lighting of the input image. This suits applications that need identity consistency across edits.

Capabilities

High-Quality Output

The model excels in generating visually stunning images across diverse styles and resolutions.

Style Adaptability

Choose from a range of model weights, such as stable-diffusion-xl-base-1.0 or anime-art-diffusion-xl, to achieve the desired aesthetic.

Precision Controls

Leverage pose, canny, and depth controls to craft outputs with fine detail and alignment.

Use cases

Use Cases for instant-id

E-commerce developers building automated image editing API tools can upload a product photo with a model and prompt "transform this portrait to wear a red evening gown in a sunset beach scene while keeping the exact face and smile," generating catalog-ready visuals instantly without reshoots.

Content creators use instant-id for social media personalization, feeding a selfie plus "age this person 20 years with silver hair and professional attire in an office," to produce hyper-realistic aging effects that preserve unique identity traits for viral storytelling.

Marketers targeting branded campaigns leverage its identity consistency by inputting executive headshots and specifying "place this face on a diverse team in a modern conference room with company logo," streamlining diverse representation without identity loss.

App designers integrating AI image-editing features can build avatar customizers, where users provide a photo and describe "add cyberpunk neon tattoos and glowing eyes," yielding coherent, high-quality results for gaming and AR experiences.

Tips & tricks

How to Use instant-id on Eachlabs

Access instant-id through Eachlabs' Playground for quick tests with reference images and text prompts, or integrate via API and SDK for production-scale image-to-image AI model workflows. Provide a face image, descriptive prompt, and optional parameters like style strength or resolution; expect high-res PNG/JPG outputs in seconds with preserved identity details. Eachlabs simplifies deployment for all instant-id API needs.
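As a minimal sketch of the inputs described above, the snippet below assembles a request body from a face image, a prompt, and optional parameters. The field names (`face_image`, `prompt`, `style_strength`, `width`, `height`) and the example URL are assumptions for illustration, not the documented Eachlabs schema; check the API reference for the real names before sending anything.

```python
import json


def build_request(face_image_url, prompt, style_strength=None, width=None, height=None):
    """Assemble a request body. All field names here are hypothetical."""
    if not face_image_url:
        raise ValueError("a face image is required")
    if not prompt or not prompt.strip():
        raise ValueError("a descriptive prompt is required")

    payload = {"face_image": face_image_url, "prompt": prompt.strip()}

    # Include optional parameters only when the caller set them,
    # so the service can fall back to its own defaults.
    optional = {"style_strength": style_strength, "width": width, "height": height}
    payload.update({key: value for key, value in optional.items() if value is not None})
    return payload


body = build_request(
    "https://example.com/selfie.png",  # placeholder URL
    "add cyberpunk neon tattoos and glowing eyes",
    style_strength=0.8,
)
print(json.dumps(body, indent=2))
```

Leaving unset options out of the body keeps the sketch independent of whatever default values the service applies.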

Technical spec

What Sets instant-id Apart

instant-id stands out in the competitive landscape of image-to-image AI models through its specialized focus on instant identity preservation, leveraging Tencent's Hunyuan-inspired multimodal framework for superior facial fidelity without per-subject fine-tuning.

- Zero-shot identity retention: Maintains precise facial structures, skin tones, and expressions from a single reference image, even under style changes or environmental shifts—enabling reliable person-specific edits that generic models often distort.

- Multimodal token modeling: Processes text and image inputs in a unified autoregressive framework with 80B parameters (13B active MoE), delivering enhanced prompt adherence and world-knowledge integration for contextually accurate transformations.

- Efficient high-resolution output: Supports detailed generations up to high resolutions with fast inference, optimized for real-time workflows like AI photo editing for e-commerce, where speed meets quality.

Unlike diffusion-heavy competitors, instant-id's architecture ensures structural coherence and multilingual prompt handling, making it a top choice for precise instant-id API integrations.

Things to be aware of

Generate a photorealistic portrait using stable-diffusion-xl-base-1.0 with fine-tuned controlnet settings.

Experiment with anime-inspired outputs using anime-art-diffusion-xl.

Combine pose control with a well-defined prompt to create dynamic, action-packed scenes.

Adjust guidance_scale and pose_strength to observe how the model interprets intricate instructions.
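One way to run the experiment in the last tip is a small grid over `guidance_scale` and `pose_strength`. The sketch below only builds the parameter combinations; the value ranges and the fixed `seed` field are assumptions chosen for illustration, and the accepted ranges should be taken from the model's input schema.

```python
from itertools import product


def parameter_grid(guidance_scales, pose_strengths, seed=0):
    """Return one settings dict per (guidance_scale, pose_strength) pair.

    A fixed seed (hypothetical field) keeps other randomness constant so
    differences between outputs can be attributed to the two parameters.
    """
    return [
        {"guidance_scale": scale, "pose_strength": strength, "seed": seed}
        for scale, strength in product(guidance_scales, pose_strengths)
    ]


# Illustrative values only.
grid = parameter_grid(guidance_scales=[3.0, 5.0, 7.5], pose_strengths=[0.4, 0.8])
for settings in grid:
    print(settings)
```

Changing one parameter at a time across the grid makes it easier to see which setting is responsible for a given change in the output.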

Key considerations

Prompt Quality: Clear, descriptive prompts lead to better results. Use negative_prompt to explicitly exclude undesired features.

Pose and Depth Control: Ensure pose and depth input images align with the desired output structure for effective conditioning.

Safety Checker: Enabling or disabling the safety checker impacts output filtering. Use discretion when disabling it.
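The prompt and control-image considerations above can be turned into a pre-flight check before submitting a job. This is a sketch under stated assumptions: "alignment" is approximated here as matching aspect ratio between each control image and the target output, the three-word prompt threshold is arbitrary, and every name below is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ControlImage:
    kind: str    # e.g. "pose" or "depth"
    width: int   # pixels
    height: int  # pixels


def build_negative_prompt(unwanted):
    """Join unwanted features into one comma-separated negative_prompt string."""
    cleaned = [item.strip() for item in unwanted if item.strip()]
    return ", ".join(cleaned)


def preflight_issues(prompt, target_size, control_images, tolerance=0.01):
    """Return a list of human-readable warnings; an empty list means no issues found."""
    issues = []
    if len(prompt.split()) < 3:  # arbitrary threshold for "too terse"
        issues.append("prompt is very short; add descriptive detail")

    target_ratio = target_size[0] / target_size[1]
    for image in control_images:
        ratio = image.width / image.height
        if abs(ratio - target_ratio) > tolerance:
            issues.append(
                f"{image.kind} image aspect ratio {ratio:.2f} "
                f"differs from target {target_ratio:.2f}"
            )
    return issues


negative_prompt = build_negative_prompt(["blurry", "extra fingers", "watermark"])
warnings = preflight_issues(
    "studio portrait of a chef in a white jacket",
    target_size=(1024, 1024),
    control_images=[ControlImage("pose", 512, 512), ControlImage("depth", 640, 480)],
)
print(negative_prompt)
print(warnings)
```

A check like this catches only coarse mismatches; whether the pose or depth map actually matches the intended composition still needs a visual review.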

Limitations

Performance Variability: Results may vary significantly based on input prompt and style selection.

Pose Limitations: Poorly aligned or low-quality pose images can reduce output fidelity.

Complex Scenes: Highly intricate prompts may result in unexpected outputs or artifacts.

ControlNet Dependencies: Stacking too many ControlNets, or setting their strengths too high, can over-constrain the output and limit creative variation.

Output Format: PNG