FLUX-2

Flux 2 [klein] 4B from Black Forest Labs enables precise image-to-image editing using natural-language instructions and hex color control.

Avg Run Time: 10.000s

Model Slug: flux-2-klein-4b-base-edit

Playground

Input

Output

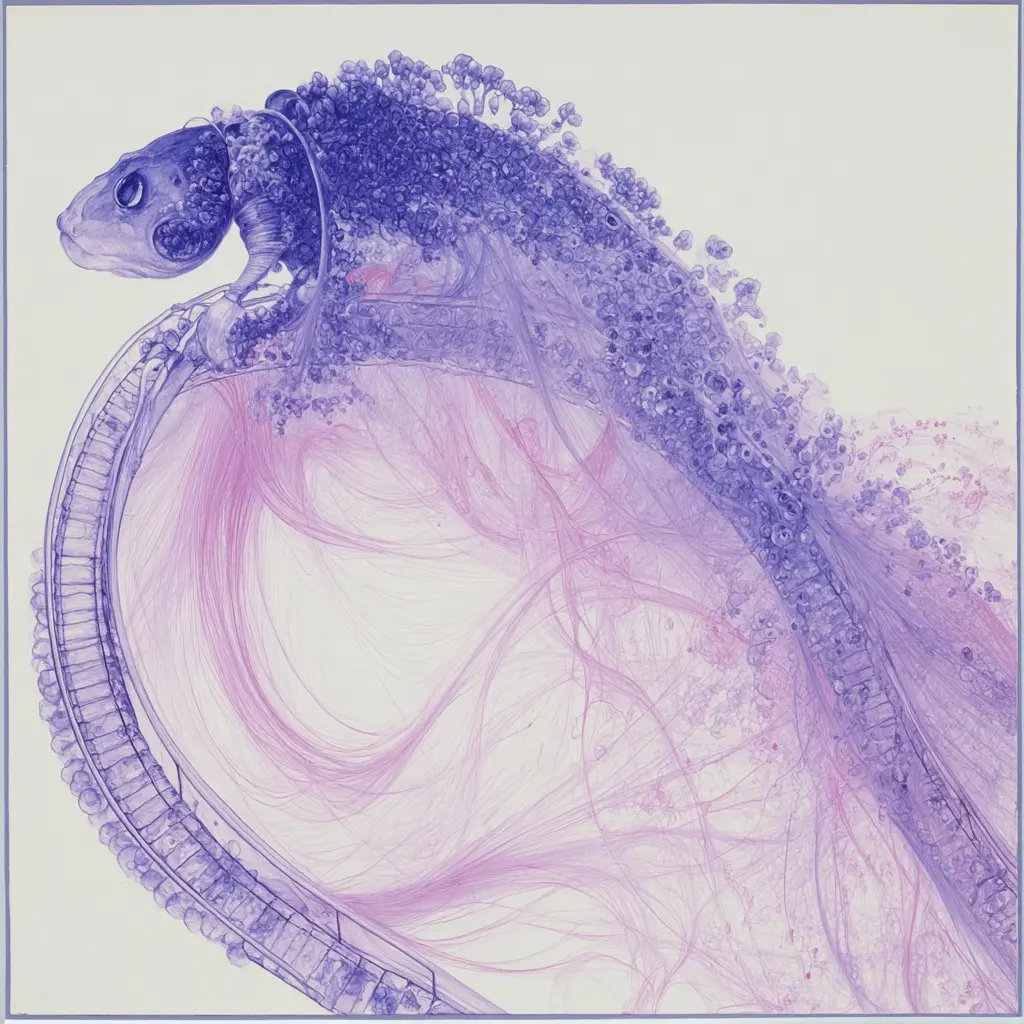

Example Result

Preview and download your result.

API & SDK

Create a Prediction

Send a POST request to create a new prediction. This will return a prediction ID that you'll use to check the result. The request should include your model inputs and API key.
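As a rough sketch of what such a request might look like, the snippet below builds the POST request with Python's standard library. The endpoint URL, the header name, and the payload field names (`input`, `prompt`, `image_url`) are placeholders assumed for illustration; only the model slug comes from this page, so check the Eachlabs API reference for the actual schema.

```python
import json
import urllib.request

# Assumed values for illustration only; the real endpoint, header name,
# and payload schema are defined by the Eachlabs API reference.
API_URL = "https://api.example.com/v1/prediction"  # hypothetical endpoint
API_KEY_HEADER = "X-API-Key"                        # hypothetical header name


def build_prediction_request(api_key: str, prompt: str, image_url: str) -> urllib.request.Request:
    """Build (but do not send) a POST request that creates a prediction."""
    payload = {
        "model": "flux-2-klein-4b-base-edit",  # model slug from this page
        "input": {                             # field names are assumptions
            "prompt": prompt,
            "image_url": image_url,
        },
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={API_KEY_HEADER: api_key, "Content-Type": "application/json"},
        method="POST",
    )


def create_prediction(request: urllib.request.Request) -> dict:
    """Send the request and return the decoded JSON response."""
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))
```

Keeping request construction separate from sending makes it easy to inspect or log the payload before any network call is made.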

Get Prediction Result

Poll the prediction endpoint with the prediction ID until the result is ready. Results are not returned immediately, so check repeatedly, with a short pause between requests, until you receive a success status.
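A polling loop along these lines could be sketched as follows. The `fetch` callable stands in for whatever HTTP call retrieves the prediction, and the `"error"` and `"failed"` status names are assumptions; only the `success` status is mentioned on this page.

```python
import time
from typing import Callable


def wait_for_prediction(
    fetch: Callable[[str], dict],
    prediction_id: str,
    interval_s: float = 1.0,
    timeout_s: float = 120.0,
) -> dict:
    """Call fetch(prediction_id) until a terminal status or the timeout."""
    deadline = time.monotonic() + timeout_s
    while True:
        result = fetch(prediction_id)
        status = result.get("status")
        if status == "success":
            return result
        if status in {"error", "failed"}:  # assumed failure statuses
            raise RuntimeError(f"prediction {prediction_id} ended with status {status!r}")
        if time.monotonic() >= deadline:
            raise TimeoutError(f"prediction {prediction_id} not ready after {timeout_s}s")
        time.sleep(interval_s)  # pause between checks to avoid hammering the API
```

Passing the fetch function in as an argument keeps the loop independent of any particular HTTP client and makes it straightforward to test with canned responses.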

Readme

Overview

flux-2-klein-4b-base-edit — Image Editing AI Model

Developed by Black Forest Labs as part of the Flux 2 family, flux-2-klein-4b-base-edit is a compact image editing model that transforms how creators refine and modify visual content. Unlike generic image editors, this model accepts natural-language instructions paired with reference images, enabling precise semantic edits—from object replacement to style transformation—without requiring manual selection tools or complex workflows. The 4B base variant prioritizes output quality and customization flexibility, making it ideal for creators who need professional-grade results without sacrificing control over the editing process.

What makes flux-2-klein-4b-base-edit distinct is its unified architecture: it handles both image generation and image-to-image editing within a single model, eliminating the need to switch between tools. Built on a rectified flow transformer with a Qwen3-based text encoder, it delivers sub-second inference on consumer GPUs while maintaining the complete training signal—meaning you can fine-tune it for domain-specific applications or train custom LoRA adapters for specialized editing tasks.

Technical Specifications

What Sets flux-2-klein-4b-base-edit Apart

Precise natural-language image editing with hex color control. Rather than relying on masks or selection tools, flux-2-klein-4b-base-edit accepts text instructions like "change the background to a sunset" or "make the product packaging blue (#0066FF)" and applies edits with semantic understanding. This enables rapid iteration for product photography, marketing content, and design workflows where color accuracy and spatial modifications matter.
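To illustrate how a hex value might be embedded in an instruction, here is a small hypothetical helper that validates the color code before building the prompt text. The six-digit format check and the uppercase normalization are assumptions made for this sketch, not documented requirements of the model.

```python
import re

# Six-digit hex colors such as #0066FF; this strictness is an assumption.
_HEX_COLOR = re.compile(r"#[0-9A-Fa-f]{6}")


def color_instruction(target: str, color_name: str, hex_code: str) -> str:
    """Return an edit instruction that names a color and its hex code."""
    if not _HEX_COLOR.fullmatch(hex_code):
        raise ValueError(f"expected a color like #0066FF, got {hex_code!r}")
    return f"make the {target} {color_name} ({hex_code.upper()})"


print(color_instruction("product packaging", "blue", "#0066ff"))
```

Validating the code up front catches typos such as a missing digit before they reach the model as an ambiguous instruction.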

Multi-reference image composition and blending. The model supports up to 4 input images simultaneously, allowing you to blend concepts, maintain character consistency across edits, and create complex compositions in a single pass. This capability is particularly valuable for character-focused creative work and iterative design where maintaining visual coherence across multiple references is critical.

Efficient local deployment with exceptional quality-to-size ratio. At 4 billion parameters, flux-2-klein-4b-base-edit runs on consumer GPUs with approximately 12–13GB VRAM (RTX 3090/4070 class hardware), yet produces professional-grade output comparable to much larger models. With quantization support (FP8 and NVFP4), it can operate on 8GB VRAM setups with minimal quality loss, making it accessible for developers building AI image editor APIs and local editing tools.
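The effect of quantization on memory can be approximated with simple arithmetic on the weights alone. The sketch below assumes 16-bit weights for the unquantized model and nominal sizes of 1 byte (FP8) and 0.5 byte (NVFP4) per parameter, ignoring scale-factor overhead. It covers only the 4-billion-parameter model weights; the text encoder, VAE, and activations need additional memory, which is why total VRAM figures are higher.

```python
PARAMS = 4_000_000_000  # 4B parameters, as stated on this page

# Nominal bytes per parameter; bf16 for the unquantized model is an assumption,
# and NVFP4 block scale factors are ignored for simplicity.
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "nvfp4": 0.5}


def weight_gb(precision: str) -> float:
    """Approximate weight-only footprint in gigabytes (10^9 bytes)."""
    return PARAMS * BYTES_PER_PARAM[precision] / 1e9


for precision in BYTES_PER_PARAM:
    print(f"{precision}: ~{weight_gb(precision):.1f} GB of weights")
```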

Technical specifications:

- Resolution: Supports 1024×1024 and higher, capable of generating up to 4MP images

- Inference steps: 25–50 configurable steps (25 for faster edits, 50 for maximum quality)

- Processing time: ~17 seconds on an RTX 5090; usable on 8–12GB VRAM setups with quantization

- Input formats: Text prompts, single or multi-reference images, hex color values for precise color matching

- License: Apache 2.0 (fully open for commercial use)

Fine-tuning and customization without distillation. Unlike distilled variants optimized purely for speed, the base model preserves the complete training signal, enabling LoRA training and custom pipeline development. This makes flux-2-klein-4b-base-edit a strong candidate for teams building specialized image editing workflows—whether for architectural visualization, fashion design, or e-commerce product photography.

Key Considerations

- The Base variant uses 25-50 inference steps, providing superior quality compared to distilled models but requiring more computational resources and longer generation times

- VRAM management is critical; the model requires approximately 12-13GB VRAM for comfortable operation, making it suitable for mid-range consumer GPUs but not entry-level hardware

- Multi-reference editing performance is significantly better on the 9B models; the 4B variant handles single-image edits more reliably than complex multi-image compositions

- Prompt engineering should be precise and detailed for optimal results, particularly when specifying editing instructions or color values

- The model demonstrates high resilience against violative inputs, having undergone third-party safety evaluation prior to release

- For production deployments requiring maximum speed, consider the distilled variants; the Base version prioritizes quality and customization flexibility

- Fine-tuning and LoRA training are viable options with the Base variant due to preserved training signal, but require appropriate GPU resources

- Character consistency and spatial logic are strong points, making the model suitable for character-focused creative work

- Iterative refinement is recommended for complex editing tasks; multiple renders may be necessary for achieving specific multi-image edit results

Tips & Tricks

How to Use flux-2-klein-4b-base-edit on Eachlabs

Access flux-2-klein-4b-base-edit through Eachlabs via the Playground for interactive testing or the API for production integration. Provide a reference image, natural-language editing instructions, and optional parameters like inference steps (25–50) and resolution. The model outputs high-resolution edited images in seconds, supporting both single-image refinements and multi-reference compositions. Use Eachlabs SDKs to integrate flux-2-klein-4b-base-edit directly into your applications, enabling seamless image editing workflows without managing GPU infrastructure.
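The constraints described on this page (25–50 inference steps, up to 4 reference images) can be checked client-side before submitting a job. The following is a minimal sketch; the field names `prompt`, `image_urls`, and `num_inference_steps` are hypothetical and may not match the actual input schema.

```python
from typing import Sequence

MIN_STEPS, MAX_STEPS = 25, 50  # step range stated on this page
MAX_IMAGES = 4                 # maximum reference images stated on this page


def build_edit_input(prompt: str, image_urls: Sequence[str], steps: int = MIN_STEPS) -> dict:
    """Validate editing inputs and return them as a dict (field names assumed)."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    if not 1 <= len(image_urls) <= MAX_IMAGES:
        raise ValueError(f"expected 1 to {MAX_IMAGES} reference images, got {len(image_urls)}")
    if not MIN_STEPS <= steps <= MAX_STEPS:
        raise ValueError(f"steps must be between {MIN_STEPS} and {MAX_STEPS}, got {steps}")
    return {
        "prompt": prompt,
        "image_urls": list(image_urls),
        "num_inference_steps": steps,
    }
```

For example, `build_edit_input("change the background to a sunset", ["https://example.com/photo.png"], steps=50)` returns a dict ready to be placed in the request body, while a step count of 10 raises a `ValueError`.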

Capabilities

- Photorealistic image generation from natural language text descriptions with high output diversity

- Precise image-to-image editing using natural language instructions and hex color control

- Multi-reference image editing, allowing users to blend concepts and iterate on complex compositions

- Unified architecture supporting text-to-image, single-image editing, and multi-reference editing without model switching

- Fast inference on modern consumer hardware for interactive creative workflows; sub-second figures apply to the distilled variants, since the Base variant runs 25-50 steps

- Strong character consistency and spatial logic for character-focused applications

- Fine-tuning and LoRA training capabilities due to undistilled architecture preserving complete training signal

- High resilience against violative inputs, demonstrated through third-party safety evaluation

- Flexible inference step configuration (25-50 steps) allowing quality-speed trade-off optimization

- Quantization support enabling operation on lower-VRAM hardware without significant quality degradation

- Professional-grade output quality that matches or exceeds larger competing models while using significantly less computational resources

What Can I Use It For?

Use Cases for flux-2-klein-4b-base-edit

E-commerce product photography and lifestyle compositing. Product teams can upload a product photo and provide a text instruction like "place this on a white marble countertop with warm morning light and a coffee cup beside it" to generate photorealistic lifestyle images without studio shoots. The hex color control ensures product packaging colors remain accurate, while multi-reference editing lets you blend multiple product angles into a single composition.

Marketing and social media content iteration. Marketers can rapidly test visual variations—changing backgrounds, adjusting lighting, swapping seasonal elements—by feeding existing campaign images into flux-2-klein-4b-base-edit with natural-language edits. This eliminates the back-and-forth with designers and enables same-day content refreshes for A/B testing across platforms.

Character-focused creative and game asset development. Designers and game developers benefit from the model's strong character consistency and spatial logic when iterating on character poses, clothing, or environmental context. You can edit a character's outfit, pose, or surroundings while maintaining facial features and identity—critical for maintaining visual coherence across multiple asset variations.

Developers building local AI image editing tools. With Apache 2.0 licensing and efficient hardware requirements, developers can integrate flux-2-klein-4b-base-edit into custom applications—from desktop editing software to web-based image editing platforms—without cloud dependencies. The fine-tuning capability enables you to train domain-specific variants for specialized use cases like real estate photo enhancement or medical imaging applications.

Things to Be Aware Of

- The 4B Base model sometimes produces slightly over-processed or "overcooked" results compared to distilled variants, particularly when using maximum step counts

- Multi-image edits can be inconsistent on the 4B model and typically require multiple renders and careful prompt engineering to achieve the desired results

- The model performs significantly better on single-image edits than complex multi-reference compositions; users should manage expectations accordingly

- VRAM requirements of 12-13GB limit accessibility to users with mid-range or higher consumer GPUs; entry-level hardware may struggle

- Generation time, cited in the specifications as roughly 17 seconds on an RTX 5090, increases noticeably on lower-tier consumer GPUs

- The model demonstrates high character consistency and spatial logic, which users consistently report as a major strength in community discussions

- Users report that the model delivers professional-grade quality suitable for production use despite its compact 4B parameter size

- The Apache 2.0 license provides commercial freedom, which users appreciate for business applications

- Community feedback indicates the model represents excellent value for users seeking to run capable image generation locally without cloud dependencies

- Users note that prompt precision significantly impacts editing quality, particularly for color control and spatial modifications

Limitations

- Multi-reference image editing performance is notably weaker than the 9B variants; complex compositions with multiple reference images may produce inconsistent results requiring multiple iterations

- The 4B model is not optimal for commercial text-to-image workflows where maximum quality is the primary concern, as larger models may deliver superior results in some scenarios

- VRAM requirements of approximately 12-13GB restrict deployment to mid-range consumer hardware and above, limiting accessibility for users with entry-level GPUs

Pricing

Pricing Type: Dynamic

Per-megapixel pricing: $0.014 base + $0.001/extra MP (ceil rounding)
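One plausible reading of this formula, sketched below, is that the base price covers the first megapixel, the image area is rounded up to whole megapixels, and each additional megapixel adds $0.001. That interpretation, and the use of 1 MP = 1,000,000 pixels, are assumptions; the pricing page or a billed invoice is the authority.

```python
import math

# Prices in thousandths of a dollar to avoid floating-point drift.
BASE_MILLI = 14      # $0.014 base
EXTRA_MP_MILLI = 1   # $0.001 per extra megapixel


def estimate_price(width: int, height: int) -> float:
    """Estimate the price in dollars for one output image (assumed formula)."""
    megapixels = math.ceil(width * height / 1_000_000)  # ceil rounding
    extra_megapixels = max(megapixels - 1, 0)            # first MP assumed in base
    return (BASE_MILLI + extra_megapixels * EXTRA_MP_MILLI) / 1000


print(estimate_price(1024, 1024))  # 1,048,576 px rounds up to 2 MP
print(estimate_price(2048, 2048))  # 4,194,304 px rounds up to 5 MP
```

Under these assumptions a 1024×1024 image costs $0.015 and a 2048×2048 image costs $0.018.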

Dev questions, real answers.

FLUX.2 Klein 4B Base Edit is Black Forest Labs' compact 4-billion-parameter image editing model in the FLUX.2 Klein family. It performs instruction-guided edits on existing images, including background changes, object swaps, and style adjustments. Its smaller size compared to the 9B variant delivers faster inference at lower cost.

FLUX.2 Klein 4B Base Edit offers faster inference and a lower cost per edit than larger FLUX.2 variants, making it well-suited for high-throughput production pipelines. It handles common editing tasks such as background removal, object replacement, and color adjustments efficiently while keeping output quality acceptable.

FLUX.2 Klein 4B Base Edit is accessible through the Eachlabs unified API with the model ID flux-2-klein-4b-base-edit. Submit a source image alongside an editing instruction to receive a modified output. Eachlabs manages Black Forest Labs credentials, so you get immediate access with no separate provider account required.